
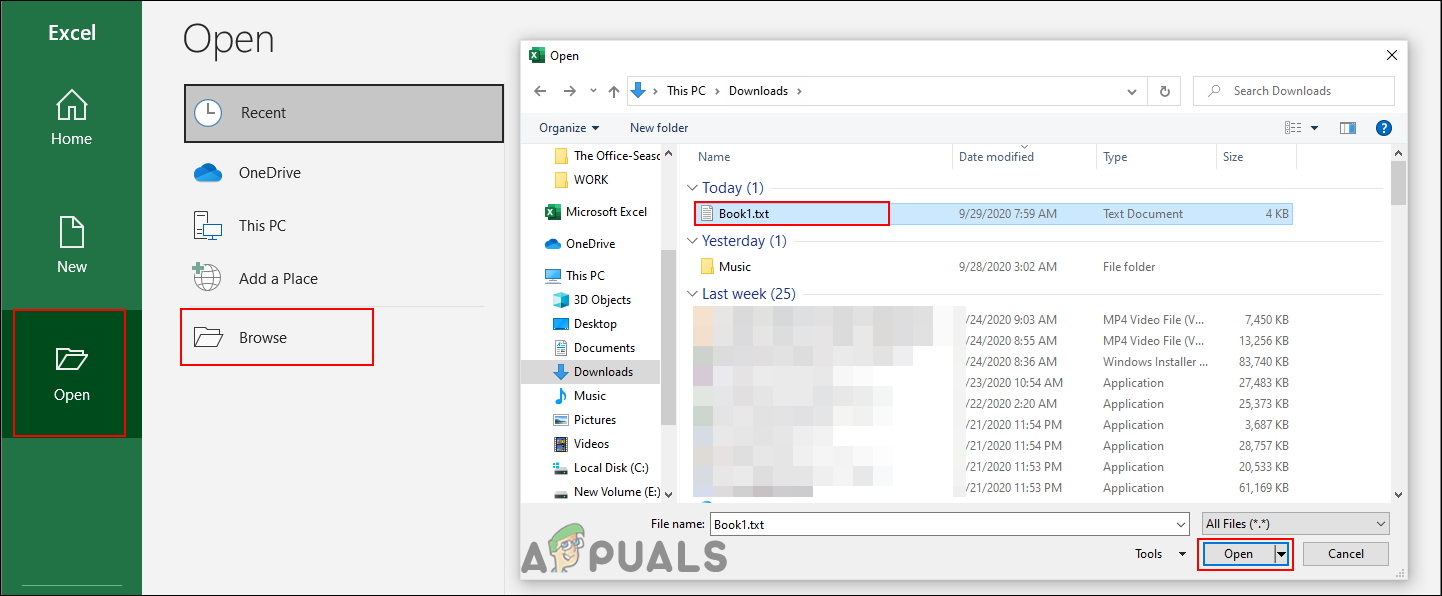

I have a .txt file that looks like this (let's name it "myfile.txt"):

28807644'~'0'~'Maun Holdings First Bank of Hills Freedom Bank of First Fund Federal Credit

I have tried a couple of ways to convert this to .csv. One of them was using the CSV library, but since I like pandas a lot, I used the following, starting with `import pandas as pd`. First, we set the path where our file is located and read it. Then we replace undesired strings and introduce a line break: `content_filtered = ….replace(…, "\n").replace("'", "")`. Next, we read everything into columns with the separator "~": `df = pd.DataFrame(…, columns = …)`, followed by `df.to_csv(path.replace('.txt', '.csv'), index = None)`. Finally, `print("Execution time in seconds: ", (end - start))` reports the timing.

This takes about 35 seconds to process a 300MB file. I can accept that type of performance, but I'm trying to do the same for a much larger file, 35GB in size, and it produces a MemoryError.

I also tried using the CSV library (`import csv`): I attempted putting everything into a list and afterward writing it out to a CSV with `with open(path.replace('.txt', '.csv'), "w") as outfile:`. Results were similar, not a huge improvement.

Is there a way or methodology I can use to convert VERY large .txt files to .csv? I'd be happy to read any suggestions you may have, thanks in advance!

I took your sample string and made a sample file by multiplying that string by 100 million (something like `your_string*1e8`) to get a test file that is 31GB. Following the suggestion of chunking, I wrote a version that processes that 31GB file in ~2 minutes, with peak RAM usage depending on the chunk size.
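The chunked code did not survive on this page, so what follows is only a minimal sketch of how a chunked conversion might look, not the original answer's code. It assumes records are separated by some delimiter string (the text does not show which one, so `record_sep` is a parameter and the `"#@#@#"` in the usage note is a made-up placeholder), that fields are separated by `~`, and that single quotes are stripped as in the pandas attempt. The names `convert_chunked` and `chunk_size` are hypothetical.

```python
import csv


def convert_chunked(src, dst, record_sep, field_sep="~", strip_chars="'",
                    chunk_size=1 << 20):
    """Stream delimited records from src into CSV rows written to dst.

    src and dst are open text file objects. Only about chunk_size
    characters (plus one partial record) are held in memory at a time.
    record_sep is an assumption supplied by the caller.
    Returns the number of rows written.
    """
    writer = csv.writer(dst)
    leftover = ""  # tail of the previous chunk that may be an incomplete record
    rows = 0
    while True:
        chunk = src.read(chunk_size)
        if not chunk:
            break
        records = (leftover + chunk).split(record_sep)
        # The last piece may be cut mid-record (or mid-separator), so it is
        # carried over and completed by the next chunk.
        leftover = records.pop()
        for record in records:
            if record.strip():
                writer.writerow(record.replace(strip_chars, "").split(field_sep))
                rows += 1
    # Whatever remains after the final chunk is the last record.
    if leftover.strip():
        writer.writerow(leftover.replace(strip_chars, "").split(field_sep))
        rows += 1
    return rows
```

To use it on real files, open the input with `open("myfile.txt", newline="")` and the output with `open("myfile.csv", "w", newline="")`, then call `convert_chunked(infile, outfile, record_sep="#@#@#")`, substituting the actual record delimiter. A larger `chunk_size` means fewer reads but a higher peak memory footprint, which matches the trade-off described above.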
